In the first post, we setup an Azure Analysis Services (AAS) instance and an On-Prem Gateway. In the second post, we built a model using SQL Server Data Tools and deployed it to AAS. In this post, we’ll cover the process to setup a scheduled refresh of the AAS model using the On-Prem Gateway, and setting up diagnostic monitoring.

First, let’s look at automating the processing of the model.

Process the Model

Rather than walk through this process step by step, I’ll point you to the excellent blog post by Jorg Klein. He uses a Logic App and Azure Data Factory to automate the processing. What follows is a summary of his steps.

App Registrations

The first step involves creating a new App Registration for Azure Analysis Services in your Azure Active Directory, to enable integration with the Microsoft Identity platform for secure service communication.

Logic Apps

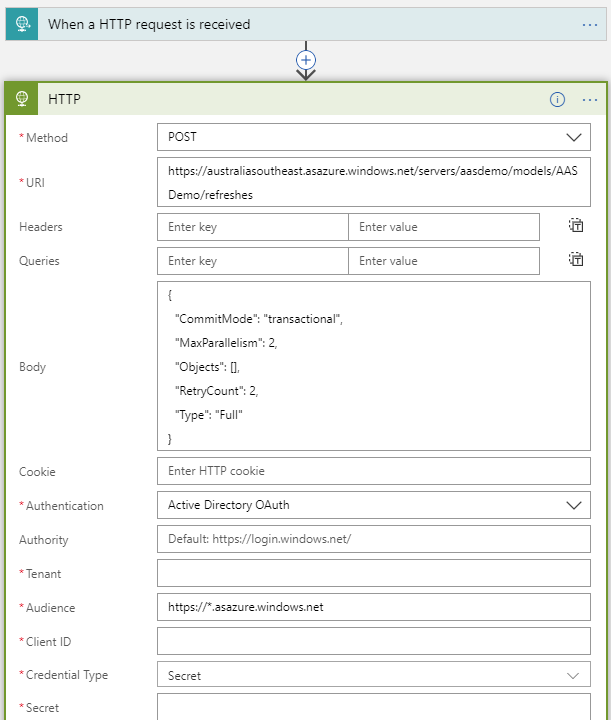

Once the App Registration is setup, we create a Logic App which is the component that calls the AAS process function using its REST API.

Azure Data Factory

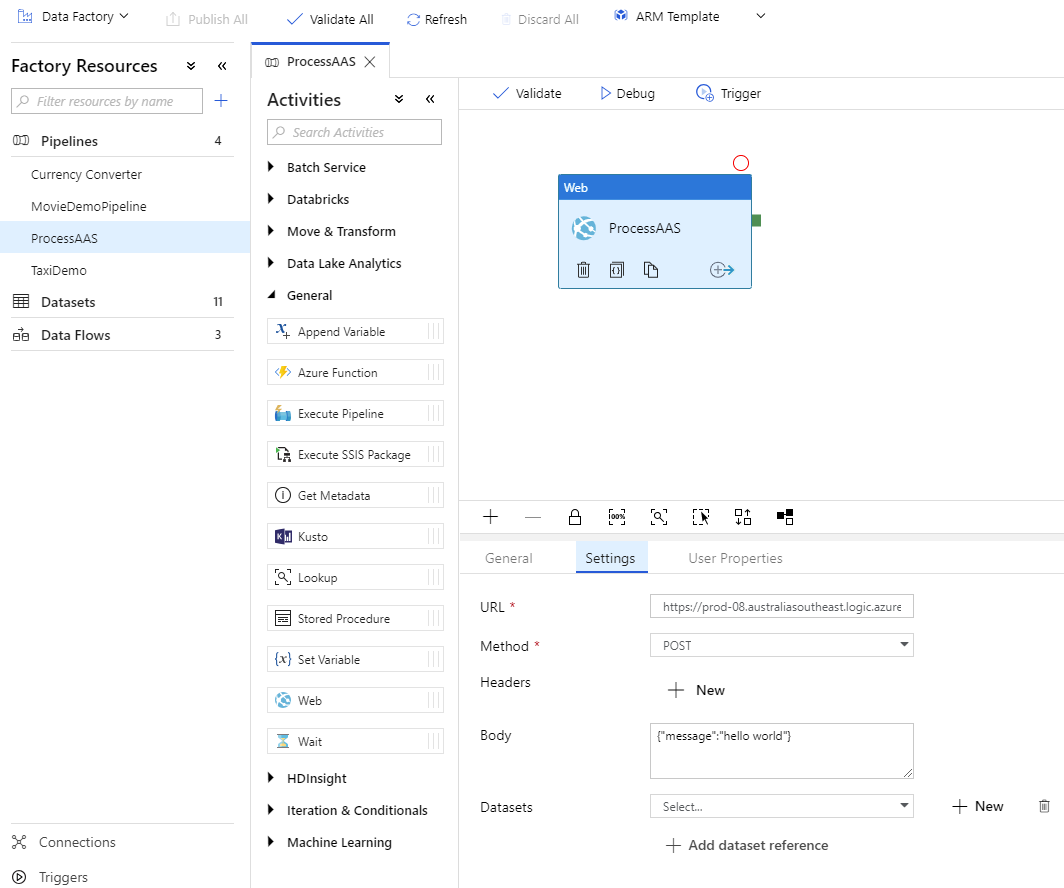

Once the Logic App is created, we schedule it using an Azure Data Factory v2 Pipeline, using a Web task, as shown below. The URL for the step is obtained from the Logic App step.

Finally, to schedule the Data Factory Pipeline, create a Trigger with the appropriate schedule, and associate it with the pipeline. I’ll cover this process in more detail in a future blog post.

As above, the blog post by Jorg Klein covers these steps in detail.

That’s it! At this point, you should have a fully automated model hosted in Azure Analysis Services using your On-Premises Gateway. Note that there are no specific steps required in Azure Analysis Services to setup Data Sources for the Gateway, like you need to do in Power BI. The data source details are contained in the model, and as long as the On-Prem Gateway can a) see it, and b) has access to it, you’re good to go.

Open in Power BI

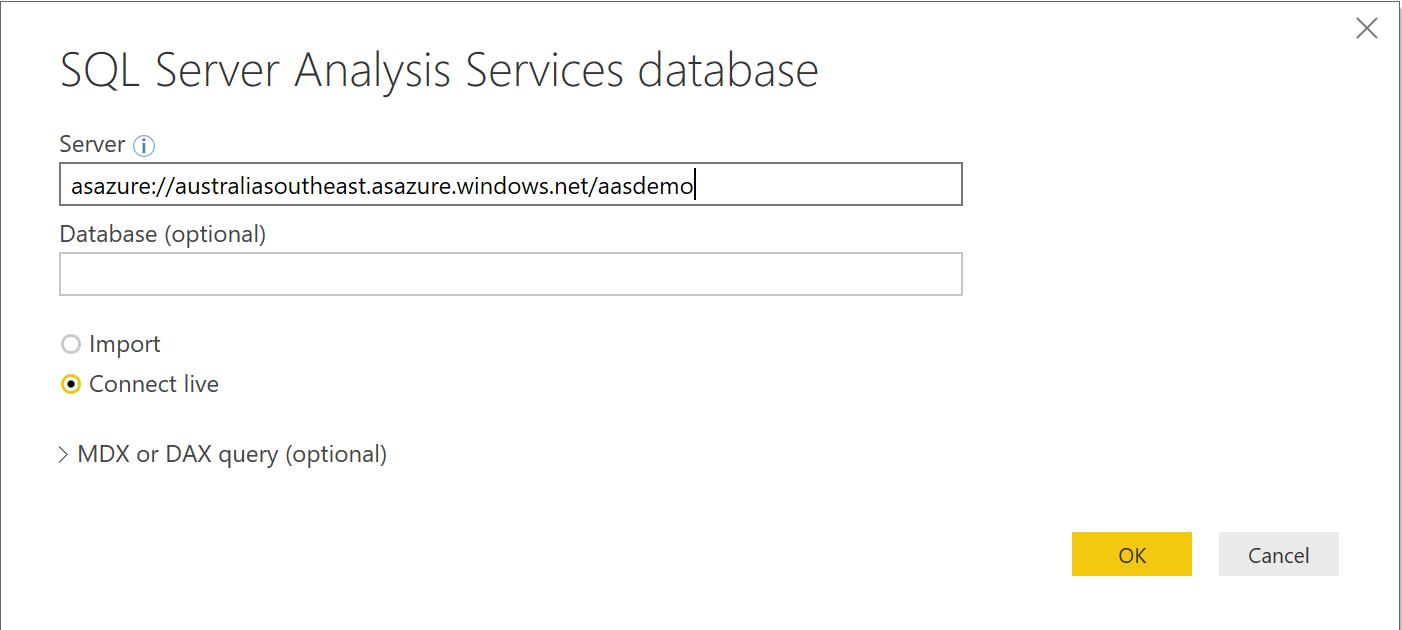

Now that you’ve got the data pipeline automation sorted, open Power BI desktop and connect to your model to create some dashboards. Simply choose Azure Analysis Services as the data source type and enter the server name, which you can get from the Azure Portal.

Diagnostic Logging with Log Analytics

Once the processing is automated, you’ll want to automate diagnostic logging and alerts to ensure any processing errors are captured with the appropriate notifications. To do this, we’ll use Azure Log Analytics. Today, there’s no way to enable metrics logging for PaaS services through the Azure portal, so we’ll need to use PowerShell.

The process to enable logging for Azure Analysis Services is covered well in this blog post by Chris Schmidt but I found I needed to edit the Powershell script to get it working;

- Manually download the powershell script from here. Unzip the script into a file on your machine

- Edit the powershell script and replace “Find-AzureRMResource” with “Get-AzureRMResource”

Then follow the rest of the blog post. This will enable the diagnostic logs from Azure Analysis Services to be ingested into log analytics.

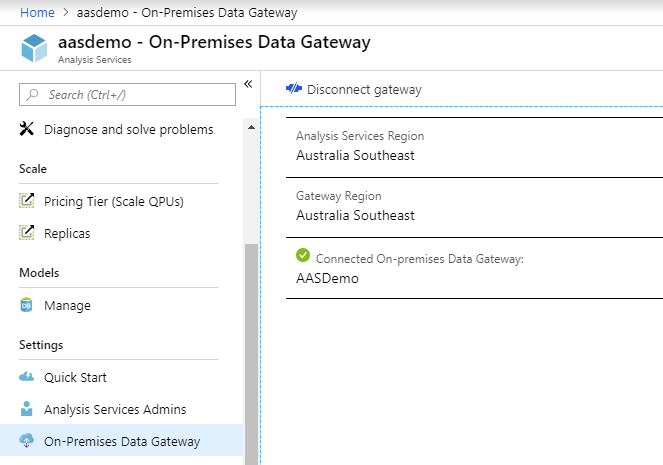

To generate a processing error, I’ve disconnected my On-Premises gateway from Azure Analysis Services. This will force an error during processing, as AAS won’t be able to connect to the SQL Server to refresh the model. You can disconnect the Gateway in the Azure portal by selecting the On-Premises blade of your AAS instance and choosing Disconnect gateway, shown below. To force a processing error, re-run the Data Factory Job.

To view the error, open your log analytics service, click on the Logs blade and run the query shown below, which filters the diagnostics for errors. In this case, you can see the error about needing an On-Premises Gateway to access the source data to process the model

Optionally, you can click + New alert rule to have emails and/or Text messages sent when these errors occur.

That completes this series of posts. I hope this has helped, and feel free to reach out through comments below!